Python's 30-Year Legacy: Is the GIL Departing or Devolving? A Deep Dive into an Engineering Trade-off

Is the GIL leaving Python? Explore the impact of PEP 703 and sub-interpreters on parallel programming, and discover how these innovations are reshaping the future of CPython and the engineering world.

"Python threads don't actually run concurrently, bro; it's because of the GIL." This line is a cliché among many developers in Turkey, but the oversimplified sentence hides a 30-year engineering saga built on one of the most fundamental principles of software engineering: the "trade-off." With Python 3.13 shipping an optional, still experimental build without the Global Interpreter Lock (GIL), we are witnessing more than just a version update; it is a reckoning between a classic design choice and modern hardware architectures.

Python’s GIL: A Convenience Born of Limitation and a 30-Year Legacy

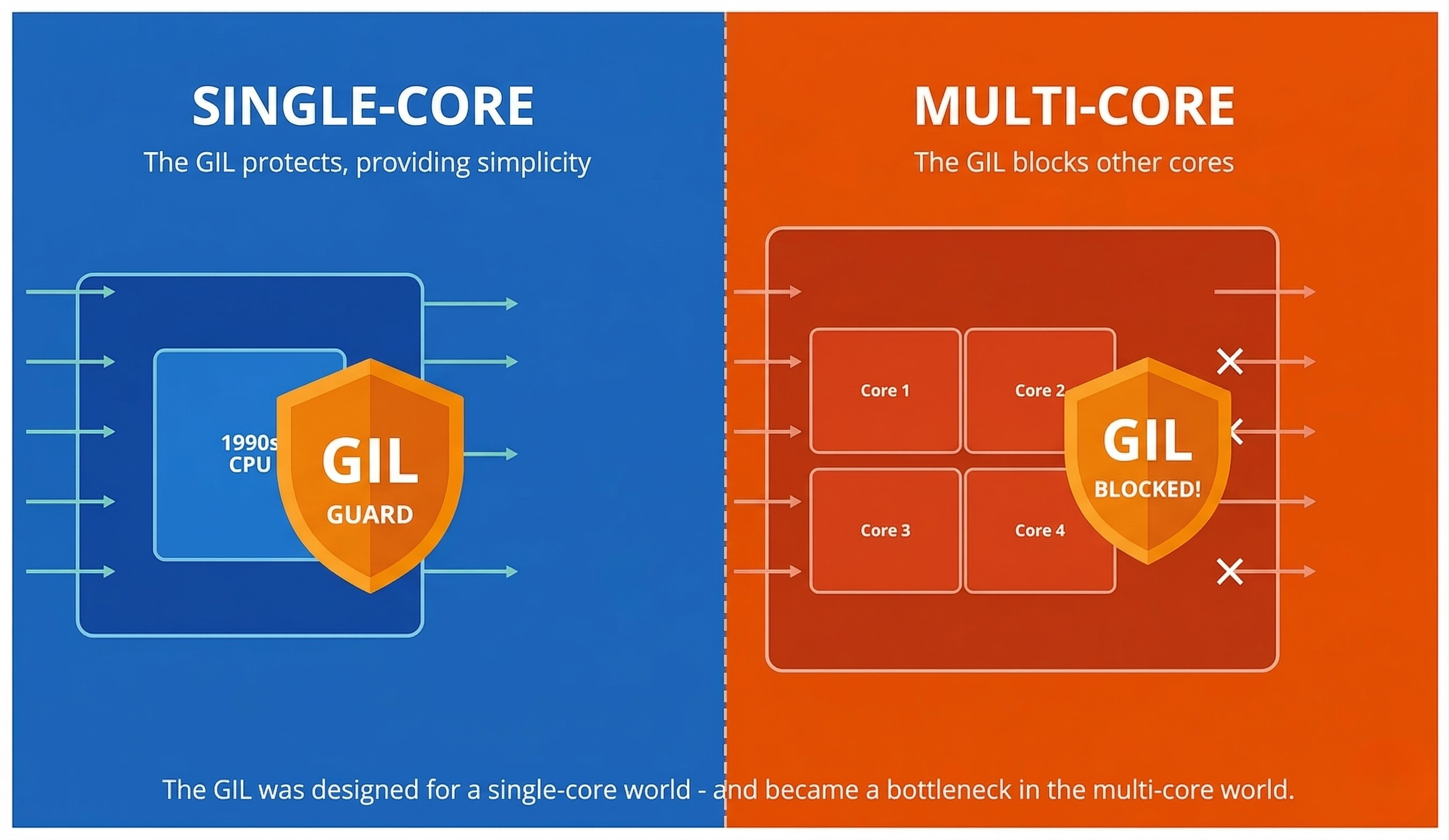

It all began in the early 1990s, when Python was still in its infancy. At that time, multi-core processors were not yet on the market, and multi-processor machines were a luxury. Mainstream processors were single-core, and the primary focus was on increasing the performance of that single core. The fundamental challenges of software development were the complexity of memory management and the bugs introduced by concurrency control. Especially in C-based languages, multiple threads attempting to access shared memory simultaneously led to "race conditions" and "deadlocks," causing programs to behave unpredictably.

At this juncture, Python’s creator, Guido van Rossum, and his team made a highly pragmatic decision: the Global Interpreter Lock (GIL). The primary goal of the GIL was simple: to simplify memory management within the Python interpreter and to ensure that libraries written in C could be easily integrated into Python. The GIL is a locking mechanism that guarantees only one thread can execute Python bytecode at any given time. In doing so, it prevented the dangerous scenarios arising from multiple threads accessing the same memory space simultaneously. This simplicity laid the foundation for Python becoming the ideal language for rapid prototyping.
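The memory management that the GIL simplifies is, at its core, reference counting: every object carries a count of how many references point to it. The following is a minimal sketch, assuming standard CPython; `sys.getrefcount` is the real API, while the helper name `references_added` is made up for illustration.

```python
import sys


def references_added(extra):
    """Return how much an object's reference count grows after `extra` new references."""
    obj = []                        # a fresh object to observe
    before = sys.getrefcount(obj)   # absolute value varies by CPython version
    holders = [obj] * extra         # each list slot stores one more reference to obj
    after = sys.getrefcount(obj)
    del holders                     # dropping the list releases those references again
    return after - before


print(references_added(3))
```

Every one of those increments and decrements is a read-modify-write on shared memory. With only one thread allowed inside the interpreter at a time, CPython could leave them unsynchronized.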

In the context of that era, this decision was a brilliant trade-off. In fact, the GIL was one of the unsung heroes behind the rapid development of libraries like NumPy and SciPy. However, this convenience came at a price—a price that would only be truly felt years later with the revolution in the hardware world. Labeling the GIL as a "mistake" through today's lens ignores the priorities of that period. But like every cost, this one had an expiration date, and that date passed long ago with the rise of multi-core processors.

The Cost of Single-Core Thinking in a Multi-Core World

Time passed, and when the tech industry hit the physical limits of increasing single-core speeds, the solution was found in adding more cores. Now, 8, 16, or even 64-core processors have become commonplace. Application performance no longer depends solely on the speed of a single core, but on how efficiently it can utilize multiple cores simultaneously. At this point, Python’s pragmatic decision turned into its greatest weakness.

The bottleneck created by the GIL became starkly evident, particularly in CPU-bound tasks. Even when you tried to speed things up by creating multiple threads, those threads took turns holding the GIL, so only one of them executed Python bytecode at any moment; true parallelism was never achieved. This was akin to having all lanes of a highway open but only a single toll booth operator working; traffic was inevitable.
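How threads hand the GIL to one another can be observed from Python itself. This is a small sketch, assuming CPython 3.2 or later, where `sys.getswitchinterval()` reports how long a running thread may keep the GIL before the interpreter asks it to give other waiting threads a turn. The helper `describe_switch_interval` is illustrative, not a standard function.

```python
import sys


def describe_switch_interval():
    """Format the interpreter's thread switch interval in milliseconds."""
    interval = sys.getswitchinterval()  # value in seconds
    return f"Threads are asked to yield the GIL about every {interval * 1000:.1f} ms"


print(describe_switch_interval())
```

The interval only controls how often the single toll booth changes hands; it does not open a second booth.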

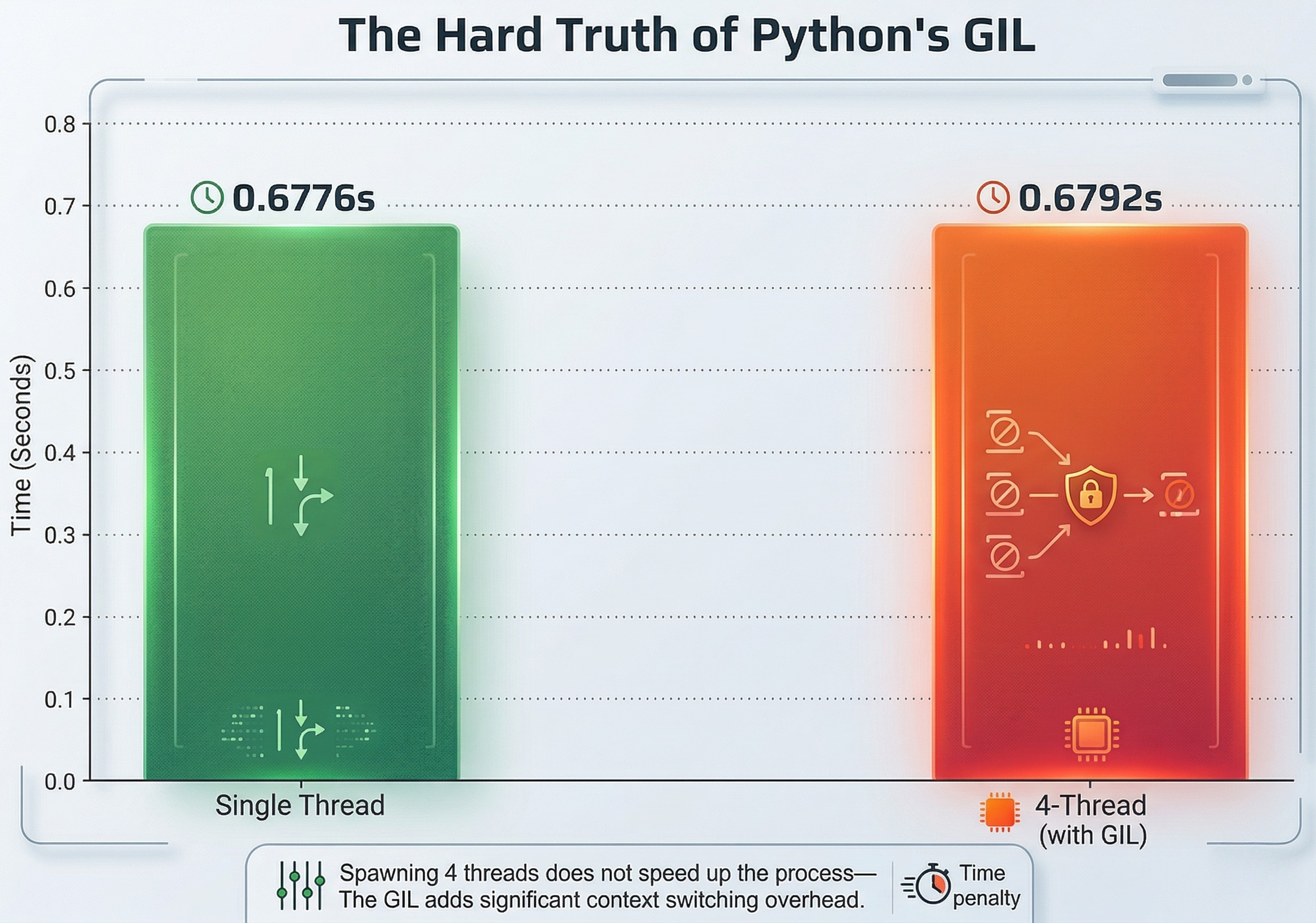

To see this concretely, let's consider a task that exhausts the CPU, measured first with a single thread and then with one thread per core. If threads scaled with the hardware, a 4-core machine would finish the threaded run roughly four times faster.

```python
import math
import os
import threading
import time


def cpu_bound_task(n):
    # A CPU-intensive task: performing square root and trigonometric operations
    result = 0.0
    for i in range(n):
        result += math.sqrt(i) * math.sin(i) * math.cos(i)
    return result


def run_task_in_thread(n, iterations, results, index):
    # Worker: run the task repeatedly and record this thread's own elapsed time
    start_time = time.perf_counter()
    for _ in range(iterations):
        cpu_bound_task(n)
    results[index] = time.perf_counter() - start_time


def run_single_thread(n, iterations):
    start_time = time.perf_counter()
    for _ in range(iterations):
        cpu_bound_task(n)
    return time.perf_counter() - start_time


def run_multi_thread(n, total_iterations, num_threads):
    threads = []
    results = [0.0] * num_threads
    # Integer division: any remainder is dropped (50 // 4 gives 12 per thread)
    iterations_per_thread = total_iterations // num_threads

    start_time = time.perf_counter()
    for i in range(num_threads):
        thread = threading.Thread(
            target=run_task_in_thread,
            args=(n, iterations_per_thread, results, i),
        )
        threads.append(thread)
        thread.start()

    for thread in threads:
        thread.join()
    return time.perf_counter() - start_time


if __name__ == "__main__":
    # Parameters for the test
    N = 10**5
    TOTAL_ITERATIONS = 50
    NUM_THREADS = os.cpu_count() or 4
    print(f"CPU Core Count: {NUM_THREADS}")

    # Single-threaded execution
    single_thread_time = run_single_thread(N, TOTAL_ITERATIONS)
    print(f"Single-thread duration: {single_thread_time:.4f} seconds")

    # Multi-threaded execution (affected by GIL in standard CPython)
    multi_thread_time = run_multi_thread(N, TOTAL_ITERATIONS, NUM_THREADS)
    print(f"{NUM_THREADS}-thread duration (with GIL): {multi_thread_time:.4f} seconds")
```

Real output (4-core machine):

```
CPU Core Count: 4
Single-thread duration: 0.6776 seconds
4-thread duration (with GIL): 0.6792 seconds
```

The result is striking: instead of speeding up the process fourfold, spawning 4 threads completed the task in nearly the same amount of time as a single thread. In fact, it was slightly slower due to the additional overhead of thread management. The GIL effectively kills parallelism in processor-intensive tasks. This reality made it difficult for Python to compete with languages like Go, Rust, or C++ in high-performance computing fields.
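The two timings above can be turned into the usual scaling metrics. This is a small sketch of the arithmetic, using the standard definitions of speedup (single-thread time divided by multi-thread time) and parallel efficiency (speedup divided by worker count); the function names are illustrative.

```python
def speedup(single_seconds, multi_seconds):
    """How many times faster the multi-threaded run was (1.0 means no gain)."""
    return single_seconds / multi_seconds


def parallel_efficiency(single_seconds, multi_seconds, workers):
    """Fraction of the ideal linear speedup that was actually achieved."""
    return speedup(single_seconds, multi_seconds) / workers


# Timings taken from the measured output above
s = speedup(0.6776, 0.6792)
e = parallel_efficiency(0.6776, 0.6792, workers=4)
print(f"speedup: {s:.3f}x, efficiency: {e:.1%}")
```

A speedup just under 1x on four cores means an efficiency of roughly 25%: three of the four cores contributed nothing to the result.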

Python 3.13 and the "Free-threaded" Future: The Price of Freedom

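PEP 703, "Making the Global Interpreter Lock Optional in CPython," was accepted in 2023, and Python 3.13 is the first release to ship the result as an experimental "free-threaded" build. Developers can obtain an interpreter without the GIL by compiling CPython with the --disable-gil configure flag, or by installing the separate free-threaded binary (typically named python3.13t) where the official installers offer it. This means that CPU-bound threads can finally run in true parallel across multiple cores.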
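Before benchmarking anything, it helps to confirm which kind of interpreter is actually running. Here is a minimal sketch, assuming CPython: `sysconfig.get_config_var("Py_GIL_DISABLED")` reports whether the build is free-threaded, and `sys._is_gil_enabled()` (available from 3.13) reports whether the GIL is active right now. The helper `gil_status` is a name chosen for this example, and it falls back to "GIL enabled" on older versions that lack the check.

```python
import sys
import sysconfig


def gil_status():
    """Report whether this is a free-threaded build and whether the GIL is active."""
    free_threaded_build = bool(sysconfig.get_config_var("Py_GIL_DISABLED"))
    # sys._is_gil_enabled() exists only on Python 3.13+; older versions always have a GIL
    checker = getattr(sys, "_is_gil_enabled", None)
    gil_enabled = checker() if checker is not None else True
    return {"free_threaded_build": free_threaded_build, "gil_enabled": gil_enabled}


print(gil_status())
```

The two values can differ: a free-threaded build may still run with the GIL turned back on, so checking both avoids misreading a benchmark.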

However, this freedom brings new and complex trade-offs:

Single-Thread Performance: An Unexpected Slowdown

With the GIL in place, CPython could update object reference counts and manage memory with plain, non-atomic operations, because only one thread touched them at a time. In "no-GIL" mode, these operations must be thread-safe, which means more expensive atomic operations and extra bookkeeping. Initial benchmarks suggested that single-thread performance in no-GIL mode could drop by 5-10%. Scripts that never create a thread pay this cost too when they run on the free-threaded build, although the default build keeps the GIL and is unaffected.

Ecosystem Compatibility: A Massive Adaptation Process

Critical parts of libraries like NumPy, Pandas, and TensorFlow were written assuming the existence of the GIL. In a GIL-less Python, the C code in these libraries has to be audited and, in places, redesigned for thread safety. This is a massive engineering effort that could take years. During the transition, expect some of your favorite libraries to be unavailable or to throw unexpected errors in no-GIL mode.

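Developer Responsibility: The New Face of Concurrency

The GIL was a safety belt that hid many "race conditions" from developers: it protected the interpreter's internals and made many individual operations on built-in types effectively atomic, even though compound operations such as counter += 1 were never guaranteed to be safe. Now, that safety belt is optional. In no-GIL mode, threads truly run at the same time, so unsynchronized access to shared state fails more often and more visibly; you have to manage access to shared resources yourself and ensure your code is thread-safe. This requires more expertise and leads to more complex debugging.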
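Managing access to a shared resource usually means guarding it with a lock. The sketch below shows one conventional way to do that with `threading.Lock` from the standard library; the function `locked_count` and its parameters are made up for illustration.

```python
import threading


def locked_count(num_threads, increments_per_thread):
    """Increment a shared counter from several threads, guarded by a lock."""
    counter = 0
    lock = threading.Lock()

    def worker():
        nonlocal counter
        for _ in range(increments_per_thread):
            # counter += 1 is a read-modify-write; the lock makes it one indivisible step
            with lock:
                counter += 1

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter


print(locked_count(4, 10_000))  # 40000
```

Without the lock, two threads can read the same old value and one update is lost. The locked version gives the same answer with or without the GIL, at the cost of serializing the critical section.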

Conclusion

The optional GIL introduced in Python 3.13 marks the end of an era and the beginning of a new age. This shift reinforces Python's claim to be a first-class language not just for simple scripts, but for high-performance, modern systems. Removing the GIL is not a silver bullet; it presents us with a new engineering equation: In which scenario is the simplicity of the GIL-locked structure more valuable, and in which is the parallel power of the GIL-less structure worth the cost?

The Python community has chosen to pay the technical debt of a 30-year-old design decision and invest in the future. Python no longer just offers convenience; it offers a choice. The future will be written with faster, more parallel, and more conscious Python code.